What do you normally do when you look for information on the internet? Most people would say, “Google it”. In other words, you type a few words about your search into the search engine bar. Within seconds, you get your results as a list of pages. An example makes this clear.

Suppose you are searching for the best bar in Los Angeles. You type the words ‘best bar in Los Angeles’ into the search engine. This is what you get in 1.06 seconds.

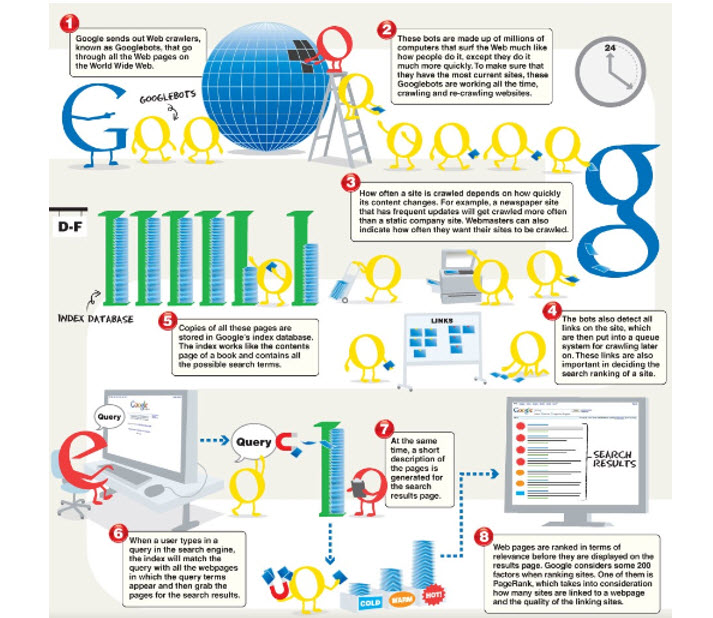

The example above shows that you get access to 153 million search results in 1.06 seconds. It is a mind-boggling figure. How does Google find this information so quickly? The following graphic explains the mechanics behind the search.

Source: https://medium.com/@BrillMindzS/need-to-know-more-about-the-technology-behind-googlebot-2bdaecbbac37

This is the concept of Googlebot, which brings us to the question of what Googlebot actually is. In simple terms, Googlebot is Google's web crawling software: it gathers information from innumerable websites so that Google can build its index and serve search engine results pages.

How does Googlebot work?

Though it is an intricate process, it looks fairly simple on paper. Googlebot, also known as a ‘spider’ or ‘web crawler’, uses sitemaps and databases of links discovered during previous crawls to build Google’s search index. In the process, Googlebot keeps discovering new web pages and regularly picks up updates to existing ones. The index is Google’s brain, and Googlebot is the tool that fuels it. Other search engines have their own web crawlers.
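Sitemaps are plain XML files that follow the sitemaps.org protocol, so a crawler can read the URLs in them with an ordinary XML parser. Here is a minimal Python sketch of that step. The sample sitemap and the `urls_from_sitemap` helper are hypothetical, and real crawlers also deal with sitemap index files, compression and much more.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol (version 0.9).
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(xml_text: str) -> list[str]:
    """Return every <loc> URL listed in a sitemap <urlset> document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


# Hypothetical sitemap for an example site.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/menu</loc><lastmod>2024-01-15</lastmod></url>
</urlset>"""

if __name__ == "__main__":
    for url in urls_from_sitemap(SAMPLE_SITEMAP):
        print(url)
```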

The difference between crawling and indexing:

Many people confuse the terms ‘crawling’ and ‘indexing’ and take them to mean the same thing, but they do not. Crawling is the first step towards indexing: it means discovering and fetching pages on the internet, whereas indexing means analyzing and storing the fetched content so that it can be retrieved in search. Hence, every page that is indexed has to be crawled, but not every crawled page gets indexed. Googlebot does the job of crawling through websites for the purpose of indexing.
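To make the crawling/indexing distinction concrete, here is a toy Python sketch of the indexing side: it turns pages a crawler has already fetched into an inverted index (word to URLs) and answers a simple query. The page data, the `skip` set and the function names are hypothetical; Google's real index is far more sophisticated.

```python
import re
from collections import defaultdict


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(crawled_pages: dict[str, str], skip: set[str]) -> dict[str, set[str]]:
    """Build an inverted index (word -> URLs) from pages the crawler fetched.

    URLs in `skip` were crawled but are deliberately left out of the index,
    mirroring the point that crawling does not guarantee indexing.
    """
    index = defaultdict(set)
    for url, text in crawled_pages.items():
        if url in skip:
            continue
        for word in tokenize(text):
            index[word].add(url)
    return dict(index)


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return the URLs that contain every word of the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


# Hypothetical crawl results: URL -> page text.
CRAWLED = {
    "https://example.com/a": "Best bar in Los Angeles",
    "https://example.com/b": "Cocktail bar menu",
    "https://example.com/c": "Thin duplicate page about a bar",
}
INDEX = build_index(CRAWLED, skip={"https://example.com/c"})

if __name__ == "__main__":
    print(search(INDEX, "los angeles bar"))
```

Page `c` was crawled but never indexed, so it can never show up in a search result.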

How does Googlebot crawl through the webpages?

The process starts with a list of web addresses from previous crawls and the sitemaps provided by website owners. The pages at those addresses contain links, which the crawler follows to discover more pages. Googlebot pays close attention to new websites, dead links, and changes to existing websites. Its principal responsibility is to gather information from billions of web pages so that Google Search can organize it in the search index.
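The crawl loop described above can be sketched as a breadth-first traversal: start from seed URLs, fetch each page, queue any links not seen before, and note addresses that cannot be fetched (dead links). In this toy Python version the "web" is a hypothetical in-memory dictionary, so it runs without network access; it is not how Googlebot is actually implemented.

```python
from collections import deque
from typing import Callable, Optional

# Hypothetical link graph standing in for the web: URL -> links found on that page.
LINK_GRAPH = {
    "https://example.com/": ["https://example.com/menu", "https://example.com/events"],
    "https://example.com/menu": ["https://example.com/"],
    "https://example.com/events": [
        "https://example.com/events/friday",
        "https://example.com/old-page",  # not in the graph, so it behaves like a dead link
    ],
    "https://example.com/events/friday": [],
}


def crawl(
    seeds: list[str],
    fetch_links: Callable[[str], Optional[list[str]]],
    max_pages: int = 100,
) -> tuple[list[str], list[str]]:
    """Breadth-first crawl from seed URLs (e.g. a previous crawl list plus sitemap URLs).

    `fetch_links` returns the links on a page, or None if the page cannot be fetched.
    Returns (pages visited in crawl order, dead links).
    """
    frontier = deque(seeds)
    seen = set(seeds)
    visited: list[str] = []
    dead: list[str] = []
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        links = fetch_links(url)
        if links is None:
            dead.append(url)
            continue
        visited.append(url)
        for link in links:
            if link not in seen:  # queue each URL only once
                seen.add(link)
                frontier.append(link)
    return visited, dead


if __name__ == "__main__":
    pages, broken = crawl(["https://example.com/"], LINK_GRAPH.get)
    print("crawled:", pages)
    print("dead links:", broken)
```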

How do you make website content readable?

Googlebot skims through your content and looks for relevant keywords, so it is important for your website to have quality content. What makes content high quality? Make sure your website content has the following qualities.

- Strong headlines – Grabbing the attention of the customer is of utmost importance, and there is no better way to do it than with strong headlines.

- Use subheads – Subheads that summarize the paragraph beneath them are a good way to attract the reader's attention.

- Bullets and numbering – Bulleting the important parts of the content makes them stand out from the rest. Numbering is useful when you want to present your content in a particular order.

- Short sentences and paragraphs – Short sentences help you communicate better with your clients, and Googlebot finds it easier to pick up information from such content.

- Use quality keywords – Googlebot pays a lot of attention to keywords. Investing in a strong keyword strategy is the key to success.

How do you get Google to crawl your website faster?

One of the main factors that influences how Googlebot crawls your website is the quality of the links pointing to it. If authoritative websites link to your content, Googlebot treats that content as important; it does not like to waste time on unimportant content. This is why securing quality backlinks to your website matters.
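The idea that links from important pages make a page important is the intuition behind the classic PageRank algorithm. Below is a simplified textbook version run on a made-up link graph (all page names are hypothetical). It only illustrates the intuition; it is not a description of how Googlebot actually decides what to crawl or how Google ranks pages today.

```python
def pagerank(graph: dict[str, list[str]], damping: float = 0.85, iterations: int = 50) -> dict[str, float]:
    """Simplified PageRank by power iteration.

    `graph` maps each page to the pages it links to; every link target must
    also be a key. Scores always sum to 1.
    """
    pages = list(graph)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page gets a small baseline share ("random jump").
        new_rank = {p: (1 - damping) / n for p in pages}
        for page, links in graph.items():
            if links:
                # A page passes its score on, split evenly across its outgoing links.
                share = damping * rank[page] / len(links)
                for target in links:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its score over all pages.
                for p in pages:
                    new_rank[p] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical link graph: page -> pages it links to.
EXAMPLE_GRAPH = {
    "authority-news": ["your-site"],
    "blog-a": ["your-site", "authority-news"],
    "blog-b": ["authority-news"],
    "your-site": ["blog-a"],
    "orphan": [],
}

if __name__ == "__main__":
    for page, score in sorted(pagerank(EXAMPLE_GRAPH).items(), key=lambda kv: -kv[1]):
        print(f"{page:15} {score:.3f}")
```

In this toy graph, `your-site` scores well above `blog-b` because it is linked from the well-linked `authority-news` page, while nothing links to `blog-b`.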

What measures help you rank high in search results?

Securing a high rank on the SERPs is essential for any business. Web crawlers like Googlebot need to visit your website, because crawling is a prerequisite for indexing your web pages. Fix these three aspects to help your website rank higher.

- Speed of the server – The crawl statistics in Google Search Console give you an idea of how fast your server responds. If you are on a slow server, consider switching to a faster one.

- Errors on your website – A large number of errors on your website slows down crawling. Fixing errors such as 404 (page not found) errors can improve the crawl rate. You can use 301 (permanent) redirects to point these broken URLs to the proper pages on your website.

- Number of URLs on your website – The more URLs your website has, the slower it gets crawled. Keep the number of URLs lean to get better performance.
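For the "Errors on your website" point, a 301 redirect is simply an HTTP response with status `301 Moved Permanently` and a `Location` header naming the new URL. Below is a minimal sketch as a plain WSGI application in Python. The URL mappings and the `check` helper are hypothetical, made up for this example.

```python
# Hypothetical mapping from URLs that currently return 404 to their replacements.
REDIRECTS = {
    "/old-menu": "/menu",
    "/events-2019": "/events",
}
LIVE_PAGES = {"/", "/menu", "/events"}


def app(environ, start_response):
    """Minimal WSGI app: 301 for moved URLs, 200 for live pages, 404 otherwise."""
    path = environ.get("PATH_INFO", "/")
    if path in REDIRECTS:
        # Permanent redirect: tells crawlers the page now lives at the new URL.
        start_response("301 Moved Permanently", [("Location", REDIRECTS[path])])
        return [b""]
    if path in LIVE_PAGES:
        start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
        return [f"Page: {path}".encode("utf-8")]
    start_response("404 Not Found", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"Not found"]


def check(path):
    """Call the app directly (no server) and return (status, Location header or None)."""
    captured = {}

    def start_response(status, headers):
        captured["status"] = status
        captured["headers"] = dict(headers)

    b"".join(app({"PATH_INFO": path}, start_response))
    return captured["status"], captured["headers"].get("Location")


if __name__ == "__main__":
    for p in ("/old-menu", "/menu", "/missing"):
        print(p, check(p))
```

To try it in a browser, the app could be served locally with the standard library's `wsgiref.simple_server.make_server`.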
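For the "Number of URLs" point, one common source of extra URLs is the same page being reachable under several variants (tracking parameters, session IDs, fragments, reordered query strings). The Python sketch below collapses such variants into one normalized form. Which parameters are safe to ignore is an assumption here: `IGNORED_PARAMS` and the `utm_` prefix rule are hypothetical choices for this example.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical query parameters assumed not to change the page content.
IGNORED_PARAMS = {"sessionid", "ref"}


def normalize(url: str) -> str:
    """Map URL variants of the same page to a single normalized URL."""
    parts = urlsplit(url)
    # Drop tracking/session parameters and sort the rest so order does not matter.
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS and not key.startswith("utm_")
    )
    path = parts.path or "/"
    # Lowercase scheme and host; the fragment (#...) is dropped entirely.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), ""))


# Hypothetical URL variants found on one site.
VARIANTS = [
    "https://Example.com/menu?utm_source=newsletter",
    "https://example.com/menu#drinks",
    "https://example.com/menu?sessionid=abc123",
    "https://example.com/events?day=fri&sort=asc",
    "https://example.com/events?sort=asc&day=fri",
]

if __name__ == "__main__":
    print(sorted({normalize(u) for u in VARIANTS}))
```

Five URL variants collapse to two distinct pages, which is the kind of reduction this point is about.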

Inference:

Crawling is of utmost importance if you wish to improve the visibility of your website. Optimizing your website for web crawlers like Googlebot is essential to securing a higher rank on the SERPs.

This article is written by Shishir, an ex-startup entrepreneur currently working on kickstarting inbound marketing for a Silicon Valley startup. He cracked the code of generating 750K monthly visits in 10 months by using creative content.
