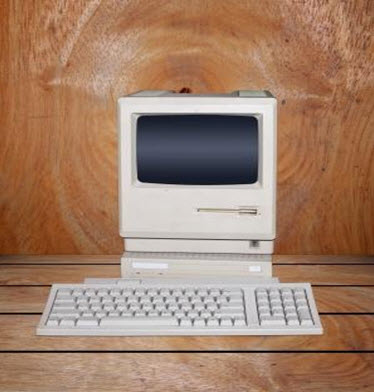

The microcomputer revolution began around 1977. Although we are all accustomed to the small, conveniently sized computers of modern times, the first microcomputers were what we would now describe as massive. The revolution takes its name from the microprocessors these machines used, which essentially allowed the computer to come into the household. Up until this point, computers were used mainly by businesses such as banks.

Microcomputers in the household

Microcomputers became the talk of the town once they entered the home. Theories of how the world would look in twenty years sprouted from every corner, and the possibilities seemed endless. The idea of using different databases for different tasks was born, as well as the concept of a multi-tasking robot to do your day-to-day duties. It was expected that if you needed medical advice you would go to a medical database, and that newspapers would be personalised to your needs. This isn’t far from what is happening today. A website with medical information is in a sense a database, and there are apps like Summly where you can choose the news you see.

The idea of a robot that would take out our household waste seems futuristic even to this day, regardless of the fact that we have robots like Roomba cleaning our floors. Just the other day, I saw a Twitter-enabled coffee pot which was created using a modern-day microcontroller (Arduino). High school science projects using Arduinos have been in the news recently, proving their accessibility. The theories proposed back in the day seemed far-fetched, but it’s actually surprising how accurate they were.

Microcontrollers such as the Arduino Uno have shaped the direction of microcomputers in a different way than microprocessors. The two are often confused as they look very similar, but microcontrollers focus on specific tasks, like making a keyboard’s input and output work, while microprocessors are designed for general-purpose work, like running video games and other software. Both kinds of device have driven the microcomputer to its current state and have made it accessible to a great number of people.

The companies involved

During the rise of the microcomputer, three companies were in fierce competition. IBM, Apple and Microsoft all wanted to win the market, but Microsoft claimed the prize because of price. Office managers had the choice of buying the superior Apple models or cheaper machines running Microsoft’s software. The fact that you could get nearly double the number of Microsoft-based computers for the price of the Apple ones meant that Microsoft inevitably won the war. IBM lost its hold on the market because it decided to make its computers out of standard parts that anyone could buy.

IBM licensed Microsoft’s Disk Operating System (MS-DOS), but the T&Cs and the way IBM operated meant Microsoft could still sell its DOS to others. This meant that two versions of the same operating system were on the market. MS-DOS went on to captivate the imaginations of its users and became the step that the company needed to become a technology superpower.

The end of the revolution?

The revolution came to an end when MS-DOS became widely popular and widely used on IBM machines. This consolidated the microcomputer movement, and offices soon became filled with MS-DOS devices.

The invention of the internet and 3D graphics drove further growth in the popularity of the computer. An article from the Guardian says that 14% of adults in the United Kingdom haven’t used the internet. Compared to when the microcomputer revolution was taking place, this figure seems small. Our dependence on the computer in modern-day life is frightfully high, but it has increased opportunity. Without the first microcomputer all those years ago, we may never have had the opportunity to Instagram our breakfasts each morning.

Image courtesy of Exsodus at freedigitalphotos.net